Physical-layer Trust: Measuring Embodied Interaction in Distributed Robotic Systems

Author: Suet Lee, 10.03.2026

Multi-robot distributed systems no longer exist purely in the realm of science fiction. There is real demand from the commercial sector for applications including disaster recovery and emergency response, security-related site mapping and surveillance, planetary scouting and space exploration, and industrial infrastructure inspection. The exact composition of robot teams may vary, from a single drone operating with multiple quadrupeds to a pair of robots working in tandem. These multi-robot teams cooperate to complete navigation, planning and mapping tasks. Depending on the scenario, tasks may require explicit, high-bandwidth communication (semantic mapping using data from sensor fusion), or minimal communication may be sufficient (robot swarms for area coverage in search and rescue). What is clear is that ensuring the trustworthiness of these systems is a critical first step towards their real-world deployment.

So, that’s it, the goal: to enable teams of robots to operate in verifiably trustworthy ways. Ensuring that robot systems are safe and secure is going to be key to their integration in the real world. As part of ARIA’s Trust Everything Everywhere programme (TEE), I’ve been exploring the synergies between cryptography and physical-layer computation to measure trust in distributed robot systems. It’s been a great space to learn from experts coming from different backgrounds.

In this blog post, I’ll be sharing lessons learned in this project. I come from a background in swarm robotics research, and I have been working with the Autodiscovery team, who bring expertise in the real-world deployment of robotic systems. These lessons point the way to what could be promising routes towards ensuring trust in robot systems everywhere.

What this blog post covers:

- A brief background to swarms and distributed robots

- Trust, security and current approaches

- Physical-layer measurements for trust

- Towards protocols for trust

- Towards subjective trust

Part 1: Swarms and distributed robot systems

Distributed robot systems find applications across many scenarios: for example, construction, transport delivery, agriculture, space exploration, and environmental monitoring[1]. They may consist of multiple different types of robots, with different roles and capabilities. A more futuristic system is a modular robot composed of components which may be attached and detached according to the desired functionality. Robot swarms are a special type of distributed robot system:

Swarm robotics is the study of multi-robot systems that exhibit global emergent behaviours through local environmental and robot-robot interactions[2].

Swarms are fascinating systems: loosely defined, they’re groups of agents which produce complex and emergent behaviours without centralized control or global knowledge. This means that agents act based only on the local information they have available. The phenomenon of emergent collective behaviour can be observed in biological and physical systems on a wide range of scales: think colonies of ants or bees, or even human societies. There is no central brain directing each individual in their actions and choices - yet somehow, the collective of individuals is able to produce complex behaviours like foraging and nest-building, rich systems of culture and technology.

A flock of birds, a murmuration, captured in film; "The art of flying" by Jan van Ijken.

One challenge for researchers studying these systems is to understand how emergent behaviour arises from local interactions between individuals.

Other characterising properties of swarms are[3]:

- Flexibility/adaptability: the swarm is able to adapt to changes in the environment and produces different solutions for tasks.

- Scalability: the swarm behaviour is preserved as the number of robots increases.

- Robustness: the swarm is resilient to failures and can continue operation despite their presence, possibly at a lower level of performance.

Swarms are often assumed to be homogeneous, where all robots are identical - in both morphology and the control algorithm. This assumption and the above characterising properties describe a more traditional robot swarm. Such properties can be leveraged to solve real-world problems where the environment is noisy and complex. For example, where 1) network connectivity is not guaranteed (e.g. in remote locations), 2) the environment is hazardous/complex/dynamic (e.g. human environments), or 3) for long-term operation where faults will occur over time.

The power in swarms, and distributed robots more generally, is that as a collective they are able to complete tasks that a single robot could not, or complete them much more effectively. In some scenarios, it may be desirable for robots to team up in an ad-hoc manner (and disband again afterwards). The robots may be operated by different corporations. In this case, ensuring that robots working together can trust each other will be necessary. There are two points that we need to consider more carefully here: first, what assumptions are we making about the robot system (e.g. degree of heterogeneity, type of communications) and second, what do we actually mean by trust?

Part 2: Cryptography and trusted systems

Trust is multi-faceted: it’s composed of complex and intersecting factors. These include:

- Safety

- Security

- Proficiency

- Explainability

- Predictability

In this project, we are not constraining the definition of trust to any one factor, although there is a focus on security and safety. Instead the aim is to understand how we can measure and quantify trust more broadly by considering the physical layer of the system - more on this later. By quantifying trust, we can provide guarantees about the behaviour of a system and make sure that it’s doing what we want and expect. There are two layers of trust we are interested in: trust between robots, and trust between humans and robots.

Artificial systems can be a black box of sorts. We usually have a mental model for biological systems and other human beings which allows us to predict their next moves and interpret their behaviour. We tend to anthropomorphize robots, for example, by projecting our own mental models onto them. This has a direct impact on perceived trust (from the human perspective).

In a distributed robot system, the question of scaling trust comes into play. Trust in a single robot looks different to trust in a large number of robots. What’s more, distributed systems may be tightly coupled (e.g. robots explicitly coordinating movement) or loosely coupled (e.g. decentralized control in swarms). Multiple moving robots can produce effects of cognitive overload on human observers. Emergent behaviours in swarms can also be hard to predict, even knowing the underlying individual behaviours. This directly impacts the perception of trust.

From a security perspective, many of the considerations for distributed networked systems also extend to robot systems. Examples include securing communication channels between robots to prevent eavesdropping and, in an ad-hoc teaming scenario, authenticating a robot before allowing it to join the team.

More specifically, some examples of vulnerabilities[4]:

- Jamming: overwhelms wireless communication channels with interference.

- Impersonation: signals can be intercepted and identity information stolen.

- Malware: attackers may plant this in compromised robots, which may spread through the system.

- Resource exhaustion: an attacker forces a robot to reconnect its communications repeatedly, consuming its resources.

- Tampering: disrupting data transmission by tampering with routing information.

The same characteristics of swarms (and distributed robots more generally) which may be advantageous for real-world operation (e.g. robustness), also present challenges for established cryptographic methods:

- Dynamic networks: robots may be in constant motion requiring changing connections to a base station (for example) and continuous authentication.

- Multi-hop communication: may assume that robots in the chain are trusted.

- Limited onboard resources: e.g. energy and compute - lightweight security is advantageous.

- Scalability: methods may need to scale for very large numbers of interacting, connected robots.

An emerging research direction for securing distributed robots is in the application of blockchain technology[5]. These approaches may rely on social comparison of observations (e.g. for environmental monitoring) made by individual robots, in order to flag faulty or malicious robots. Methods for fault detection may also be applicable for detecting malicious behaviour - the focus being to detect anomalous patterns of behaviour deviating from the expected[6].
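
To make the idea of social comparison concrete, here is a minimal sketch in which each robot reports one scalar observation and robots that disagree strongly with the group are flagged. It is not the blockchain consensus protocol of [5]: the modified z-score rule, the function name `flag_outliers`, the threshold, and the temperature readings are all assumptions chosen for illustration.

```python
from statistics import median

def flag_outliers(readings, threshold=3.5):
    """Return ids of robots whose reading deviates strongly from the group median.

    Uses a modified z-score based on the median absolute deviation (MAD),
    which is less sensitive to the outliers themselves than mean/std.
    """
    values = list(readings.values())
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        # The majority agree exactly; flag any robot that differs at all.
        return sorted(rid for rid, v in readings.items() if v != med)
    return sorted(
        rid for rid, v in readings.items()
        if 0.6745 * abs(v - med) / mad > threshold
    )

# Hypothetical temperature readings (deg C) reported by five robots.
readings = {"r1": 20.1, "r2": 19.8, "r3": 20.3, "r4": 35.0, "r5": 20.0}
suspects = flag_outliers(readings)
print(suspects)
```

Here only `r4` is flagged. A rule like this cannot tell a faulty sensor from a malicious robot, and it assumes that honest robots form the majority.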

There is one underexplored theme in this discussion: how to leverage the information generated by the interaction between robots (and with their environment) in order to verify trustworthiness and detect malicious behaviour. The interaction between multiple robots in distributed systems can produce non-linear, unpredictable behaviour. What if we can leverage this non-linearity for cryptographic applications and to secure the system itself? Another direction to consider is the situatedness of robots in the environment which can provide physical fingerprints which are difficult to manipulate by attackers. How are the signals observed from the physical layer of the system (hardware, environment, etc.) transformed through robot interactions?

Part 3: Measurements in the physical layer

First let’s determine what can be measured or observed in a distributed robot system. Observations can be made with any sensor the robot has onboard. The robot can also monitor its internal software processes and its hardware, e.g. wheel encoders. Some observable signals are:

- Motion (via cameras, infra-red sensors)

- Radio frequency

- Light

- Sound

- Forces

- Power (current, voltage)

The question we focus on is: how does interaction with the environment and between robots transform these signals and how can this information be used to measure trust?

Motion might be the most intuitive interaction signal. For subjective perception of motion, body language in animals acts as a visual cue for physiological/emotional states. The motion of a swarm may be perceived as trustworthy, or not, depending on various factors such as predictability of movement, complexity, and speed (see [7]). From a quantitative perspective, the motion of a swarm can be verified against a model of its expected behaviour using macroscopic metrics such as average speed of robots and density (see [8]). While these metrics provide some guarantees that the swarm is operating as intended, they may not be unique (as in different inputs may produce the same output) and therefore such a fingerprint may have low security strength.
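
As a rough illustration of checking macroscopic metrics against a model, the sketch below computes average speed and density for one snapshot of a swarm and compares them with expected values. The function names, the 10% tolerance, the arena size and the robot states are assumptions made for this example, not values taken from [8].

```python
import math

def macroscopic_metrics(positions, velocities, arena_area):
    """Average speed (m/s) and number density (robots per m^2) of one snapshot."""
    speeds = [math.hypot(vx, vy) for vx, vy in velocities]
    return {
        "avg_speed": sum(speeds) / len(speeds),
        "density": len(positions) / arena_area,
    }

def matches_expected(observed, expected, rel_tol=0.1):
    """True if every expected metric is matched within a relative tolerance."""
    return all(
        math.isclose(observed[name], expected[name], rel_tol=rel_tol)
        for name in expected
    )

expected = {"avg_speed": 0.5, "density": 1.0}
positions = [(0.5, 0.5), (1.5, 0.5), (0.5, 1.5), (1.5, 1.5)]  # 2 m x 2 m arena

# Two very different motions: all robots heading right, or dispersing outwards.
aligned = [(0.5, 0.0)] * 4
dispersing = [(-0.3, -0.4), (0.3, -0.4), (-0.3, 0.4), (0.3, 0.4)]

fingerprint_a = macroscopic_metrics(positions, aligned, arena_area=4.0)
fingerprint_b = macroscopic_metrics(positions, dispersing, arena_area=4.0)
print(matches_expected(fingerprint_a, expected))
print(matches_expected(fingerprint_b, expected))
```

Both checks pass even though the two motions are quite different, which is the non-uniqueness problem in miniature: the metrics confirm plausible behaviour but do not pin down which behaviour produced them.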

Radio frequency (RF) is a ubiquitous signal in communication between networked robots. It’s also vulnerable to interception and jamming by malicious agents. One example of exploiting RF in security uses geometric measurements of RF signals to verify a legitimate source in a Sybil attack scenario (spoofing client RF signals). The authors leverage the physical properties of RF in their solution, whereby the signals are reflected and propagate in the environment. Light and sound may be similarly leveraged, although these signals are more prone to dissipation and interference in the physical world.

When considering distributed robot systems such as modular robotics, where a robot may be composed of modular components, the final two observables may be most relevant. In modular robotics, the components use wired connections for power and usually for communication. They may be connected by deformable materials where tensile forces may be measured. The power usage across the system can also be measured. These signals, distributed across components, are explicitly coupled.

So why measure interactions of physical-layer signals?

The work by Gil et al. lists advantages for RF signals[9], which can also extend to other types of signals:

- Capture directional information.

- Can be obtained from a single point of observation (i.e. doesn’t require triangulation).

- Hard for attackers to manipulate (since the interaction depends on physical properties of the environment which are not easy to replicate).

- Applicable in complex multipath environments where the signal is scattered - scattered signals manifest as “measurable peaks in the fingerprint” contributing to fingerprint uniqueness.

The last two points are of particular interest: they confer the desirable properties of tamper-resistance and fingerprint uniqueness. What’s more, these physical-layer signals do not rely on securing communication channels and computing them “comes for free” (the physics of the environment and dynamics of the swarm perform the “computation”). This is promising in addressing many of the challenges for securing distributed robot systems (e.g. limited onboard resources, scalability).

In sum, these physical-layer signals may be treated as trust primitives which can be used to build up more complex measures of trust, complementing established cryptographic methods.

Part 4: Towards protocols for physical-layer trust

Can we develop protocols which leverage this set of physical-layer trust primitives? In general, the aim is to compare observations for these primitives with expectations. We can treat the robot system as a black box in this sense.

Given an input into the black box, we can record the expected output. This can be simulated to a degree of precision (assuming we know the behaviour of the robots), or this can be observed by implementation on robots before deployment. The input perturbs the system: this can include directing robots to change their behaviour, for example. If a robot is compromised and unable to produce the correct behaviour, the resulting dynamics of the system overall should fail to produce the correct output for the observed trust primitives.

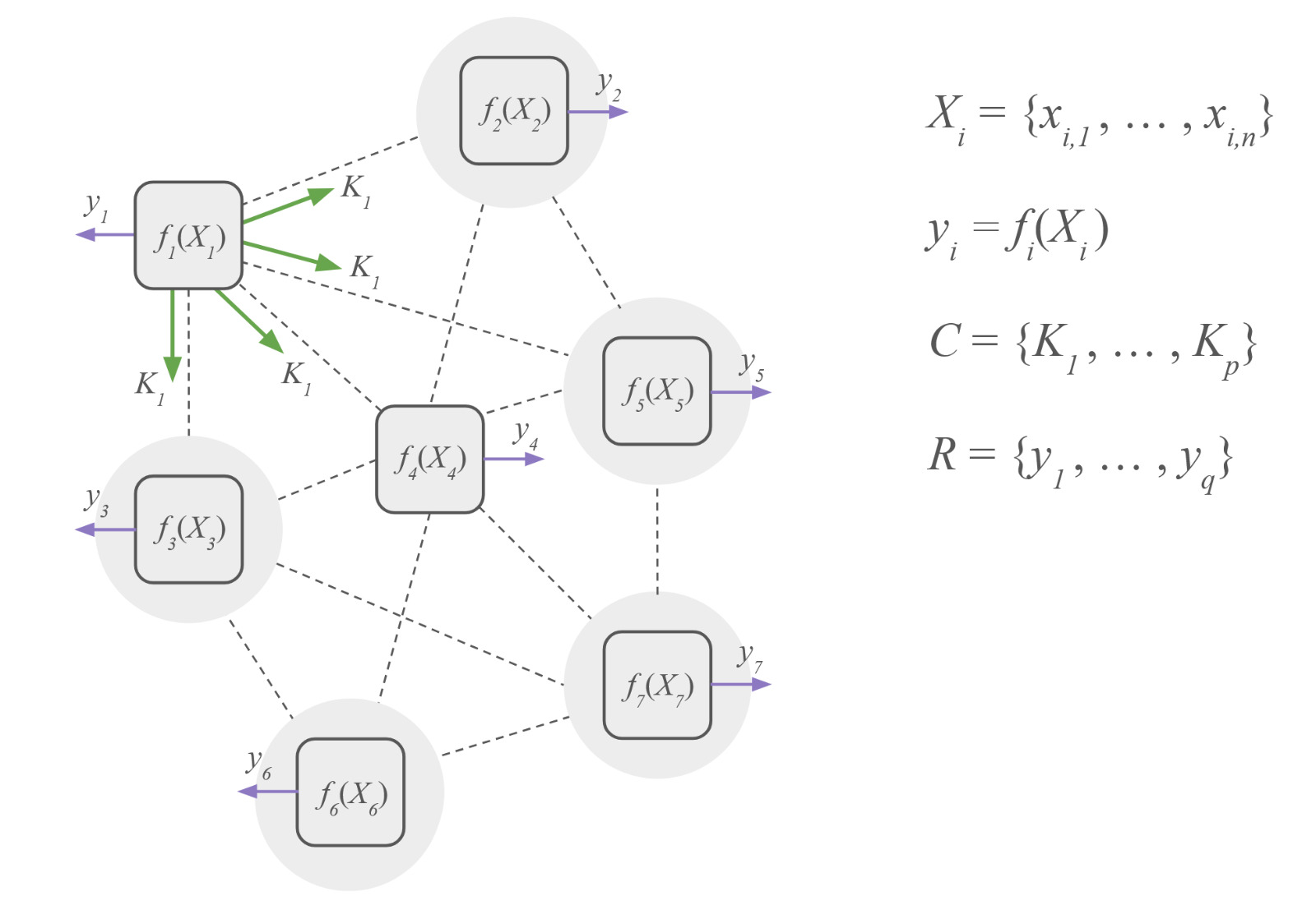

Figure 1: Grey circles represent nodes in the distributed system which may be a robot (as in a swarm) or a modular component in a single robot. The dashed lines represent physical interaction via signal propagation. Rounded squares represent the components which take observations and can also perturb the system. These components may be implemented onboard a robot or embedded in the environment.

Figure 1 illustrates a conceptual framework for a distributed network of components which can perturb the system and make observations for given trust primitives. These components can be implemented on the robots themselves or embedded in the environment. We have a set X_i of observed signals for the i-th component in the system. Each component P_i processes signals to produce a readout y_i = f(X_i), computed as some function f of the observed signals.
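
A minimal sketch of one such component, assuming the readout y_i = f(X_i) is the root-mean-square of a short window of samples and that an expected readout was recorded before deployment. The choice of RMS, the `Component` class, the tolerance and the sample values are all hypothetical; the framework itself leaves f open.

```python
import math
from dataclasses import dataclass
from typing import Callable, Sequence

def rms(signals: Sequence[float]) -> float:
    """One possible readout function f: root-mean-square of the samples."""
    return math.sqrt(sum(x * x for x in signals) / len(signals))

@dataclass
class Component:
    """An observing component P_i with its readout function f."""
    name: str
    f: Callable[[Sequence[float]], float] = rms

    def readout(self, observed: Sequence[float]) -> float:
        """Compute y_i = f(X_i) from the observed signals X_i."""
        return self.f(observed)

def consistent(y: float, y_expected: float, tol: float = 0.05) -> bool:
    """Compare a readout with the expectation, within an absolute tolerance."""
    return abs(y - y_expected) <= tol

p1 = Component("P_1")
y_expected = 1.0                            # assumed to be recorded beforehand

y_nominal = p1.readout([1.0, -1.0, 1.0, -1.0])
y_disturbed = p1.readout([1.0, -1.0, 2.0, -1.0])

print(consistent(y_nominal, y_expected))    # nominal signal
print(consistent(y_disturbed, y_expected))  # one sample disturbed
```

The nominal window matches the expectation and the disturbed one does not. In practice the tolerance would have to be set from the measured noise of the signal.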

In the context of physical-layer trust, we are particularly interested in functions which depend on the physical interactions of the system. The conceptual framework here is intentionally broad to allow for many possible function implementations. The function may not be explicitly defined, but may be encoded as a robot controller for example, where actions are triggered for given sensor inputs.

Key takeaways:

For the purpose of cryptographic applications, this framework lends itself to the implementation of challenge-response protocols. Challenges are implemented as perturbations to the system which give rise to observable responses (of trust primitives). The non-linear dynamics of the system act as a "one-way function" which is hard to reverse-engineer[10].
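
The shape of such a challenge-response exchange can be sketched with a toy non-linear system. Below, a ring of coupled logistic maps stands in for the interacting robots; the challenge sets the initial perturbation and the response is the quantised state after a fixed number of steps. The map, the coupling strength, the parameter values and the noise-free exact comparison are all assumptions for illustration. This toy is not a cryptographically hard one-way function, and a real system would need a tolerance for measurement noise.

```python
def step(states, r_values, eps=0.3):
    """One update of a ring of coupled logistic maps (a toy non-linear system)."""
    g = [r * x * (1.0 - x) for r, x in zip(r_values, states)]
    n = len(states)
    return [
        (1.0 - eps) * g[i] + 0.5 * eps * (g[i - 1] + g[(i + 1) % n])
        for i in range(n)
    ]

def respond(challenge, r_values, steps=50):
    """Apply the challenge as a perturbation, let the dynamics run, read out."""
    states = list(challenge)
    for _ in range(steps):
        states = step(states, r_values)
    return tuple(round(x, 3) for x in states)

N = 6
honest = [3.9] * N                      # every node follows the expected behaviour
challenge = [0.11, 0.27, 0.43, 0.59, 0.71, 0.83]

enrolled = respond(challenge, honest)   # expected response, recorded beforehand

compromised = honest.copy()
compromised[2] = 3.6                    # one node deviates from its behaviour

ok = respond(challenge, honest) == enrolled
tampered_ok = respond(challenge, compromised) == enrolled
print(ok, tampered_ok)
```

Because every node is coupled to its neighbours, a deviation in a single node spreads through the whole response, so the verifier does not need to observe the compromised node directly.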

Further research directions:

The uniqueness, precision and robustness of readouts need to be characterised across types of perturbations and trust primitives, and across types of systems (e.g. heterogeneous, swarms). See also reservoir computing, a paradigm in which a non-linear system projects its inputs into a higher-dimensional space[11].

Part 5: Towards subjective trust

Let’s consider a different kind of challenge: robots must maximise some joint utility measure of trust for the collective. Existing approaches seek to provide safety guarantees for the emergent behaviour of swarms using formal methods, for example[12]. However, these methods assume that the behaviour of robots is known or can be modelled. They are also computationally expensive, since they require the computation of global measures. We are instead interested in guarantees which are scalable, locally computable and applicable in real time.

In terms of locally computable measures, intrinsic motivation utilities can be useful as proxies for trust. For example, “empowerment” quantifies the potential causal influence of an agent’s actions on the world it can perceive[13]. In other words: “how in control is an agent of its environment?”. Whilst methods for fault detection compare observations to an expected baseline of “normal”, intrinsic motivation is not dependent on an externally imposed criterion or prior knowledge. An agent can maximise its own empowerment for example, which impacts the empowerment of other agents. There is room to explore such utilities in distributed computation of trust, in combination with trust primitives. In this sense, a protocol for subjective trust might look something like asking the swarm to maximize empowerment for a human user for a given trust signal (e.g. movement). Ideally, the result is a behaviour which is inherently trustworthy from the human perspective.
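
For intuition, empowerment is easy to compute in one special case. When the dynamics are deterministic and noise-free, the n-step empowerment of a state is the base-2 logarithm of the number of distinct states reachable with n actions. The sketch below uses a hypothetical grid world with five actions; the grid, the action set and the "blocked" cells standing in for other robots are assumptions, and this is not the process empowerment formulation of [13].

```python
import math
from itertools import product

ACTIONS = {"stay": (0, 0), "up": (0, -1), "down": (0, 1),
           "left": (-1, 0), "right": (1, 0)}

def move(pos, action, size, blocked):
    """Deterministic transition: a move into a wall or a blocked cell is a no-op."""
    dx, dy = ACTIONS[action]
    nxt = (pos[0] + dx, pos[1] + dy)
    inside = 0 <= nxt[0] < size and 0 <= nxt[1] < size
    return nxt if inside and nxt not in blocked else pos

def empowerment(pos, size, blocked=frozenset(), horizon=1):
    """n-step empowerment in bits, valid for deterministic dynamics only:
    log2 of the number of distinct states reachable with `horizon` actions."""
    reachable = set()
    for sequence in product(ACTIONS, repeat=horizon):
        p = pos
        for action in sequence:
            p = move(p, action, size, blocked)
        reachable.add(p)
    return math.log2(len(reachable))

centre = empowerment((2, 2), size=5)    # 5 reachable cells
corner = empowerment((0, 0), size=5)    # 3 reachable cells
neighbours = frozenset({(1, 2), (3, 2), (2, 1), (2, 3)})
boxed_in = empowerment((2, 2), size=5, blocked=neighbours)  # 1 reachable cell
print(centre, corner, boxed_in)
```

The agent in the open centre has log2(5) bits of empowerment, the one in the corner has log2(3), and the one surrounded by neighbours has zero. The last case shows how the positions of other agents directly change an agent's empowerment, using only local information.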

Key takeaways:

Physical-layer trust primitives can be leveraged in learning trustworthy behaviours for robots in a distributed system. Observations from physical interaction (e.g. motion) may encode subjective trust which can be hard to quantify.

Further research directions:

Proxies for trust, which leverage physical-layer trust primitives, can be evaluated for properties such as scalability, local computability, and real-time applicability.

Conclusion

To quantify "trust", we've looked at measuring embodied interaction in the physical layer of a distributed system. The potential for these trust primitives can be summarised as follows:

- Tamper resistance: signals are difficult to manipulate without detection.

- Efficient computation: signals propagate in the physical layer and the non-linear dynamics of the system perform "computation" for free.

- Subjective trust proxies: these signals may capture qualities of embodied interaction, related to trust, which are difficult to quantify.

Physical-layer trust primitives complement more established cryptographic methods by addressing some of the bottlenecks coming from resource-constrained hardware and scalability. The trust primitives identified can be combined with other sources of evidence to produce robust fingerprints for security and safety.

Some final words and thank yous...

First, thank you for reading this blog post on physical-layer trust!

I’ve very much enjoyed working on this project, especially learning about cryptography and security. It’s been shaped by and evolved through many on-going discussions.

This project has been a collaboration with Autodiscovery: thank you to Aron Kisdi and Wayne Tubby who supported this project with their expertise on robot hardware and real-world deployment. Thank you also to colleagues at TU Darmstadt for their insights and feedback on this project. Lastly, thank you to the TEE team at ARIA who are developing an awesome research programme and community, and for the opportunity to work on this pre-programme discovery project.

References and links

[1] Swarm Robotic Behaviors and Current Applications, Schranz, M. et al., 2020.

[2] Trustworthy Swarms, Wilson, J. et al., 2023.

[3] Swarm robotics: a review from the swarm engineering perspective, Brambilla, M. et al., 2013.

[4] A Survey on Security of UAV Swarm Networks: Attacks and Countermeasures, Wang, C. et al., 2024.

[5] Blockchain Technology Secures Robot Swarms: A Comparison of Consensus Protocols and Their Resilience to Byzantine Robots, Strobel, V. et al., 2020.

[6] A survey of modern exogenous fault detection and diagnosis methods for swarm robotics, Miller, O. G., & Gandhi, V., 2021.

[7] Towards Understanding the Impact of Swarm Motion on Human Trust, Abu-Aisheh, R. et al., 2025.

[8] Indirect Swarm Control: Characterization and Analysis of Emergent Swarm Behaviors, Vega, R. et al., 2024.

[9] Guaranteeing Spoof-Resilient Multi-Robot Networks, Gil, S. et al., 2017.

[10] Physical One-Way Functions, Pappu, R. et al., 2002.

[11] Swarm Reservoirs, CrossLabs, 2024.

[12] AERoS: Assurance of Emergent Behaviour in Autonomous Robotic Swarms, Abeywickrama, D. et al., 2023.

[13] Process empowerment for robust intrinsic motivation, Tiomkin, S. et al., 2025.